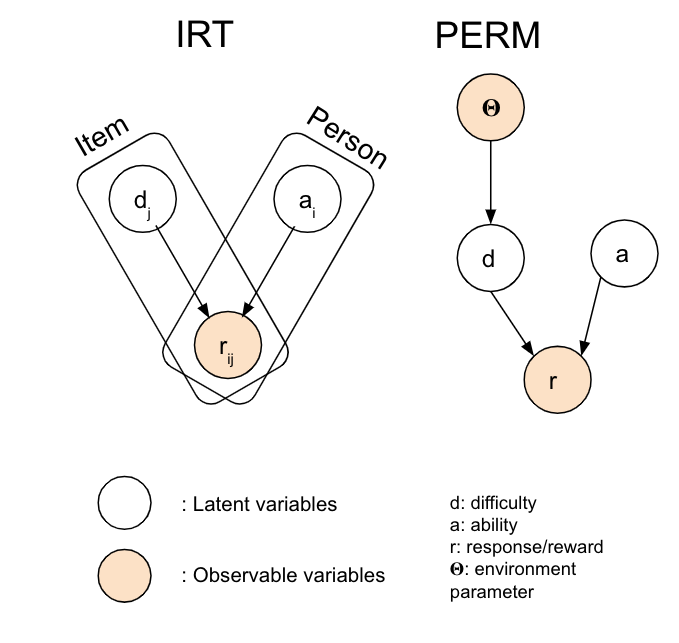

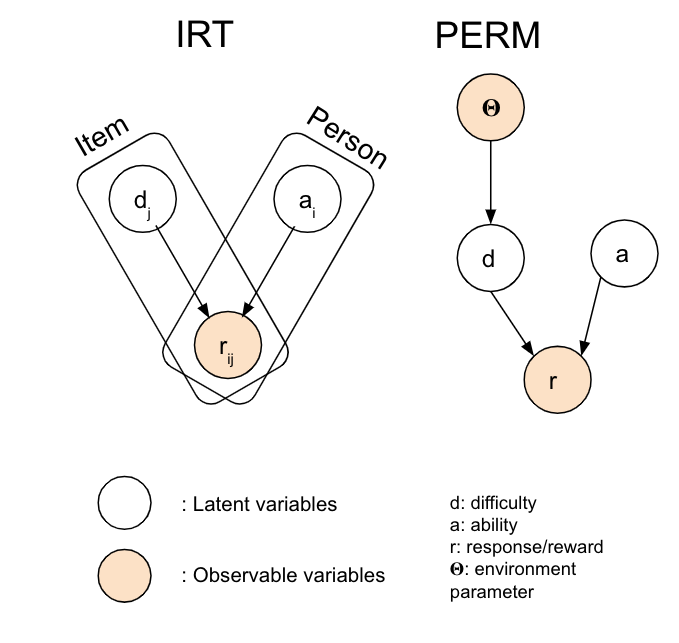

Graph representation of classic Item Response Theory and PERM

Related Links

Coming Soon!

Advancements in reinforcement learning (RL) have demonstrated superhuman performance in complex tasks such as StarCraft, Go, and Chess. However, transferring knowledge from artificial "experts" to humans remains a significant challenge. A promising avenue for such transfer is the use of curricula. Recent methods in curriculum generation focus on training RL agents efficiently, yet they rely on surrogate measures to track student progress and are not suited to training robots in the real world (or, more ambitiously, humans). In this paper, we introduce a method named Parameterized Environment Response Model (PERM) that shows promising results in training RL agents in parameterized environments. Inspired by Item Response Theory, PERM seeks to model the difficulty of environments and the ability of RL agents directly. Given that RL agents and humans are trained more efficiently within the zone of proximal development, our method generates a curriculum by matching the difficulty of an environment to the current ability of the student. In addition, PERM can be trained offline and does not employ non-stationary measures of student ability, making it suitable for transfer between students. We demonstrate PERM's ability to represent the environment parameter space, and show that training RL agents with PERM produces strong performance in deterministic environments. Lastly, we show that our method is transferable between students without any sacrifice in training quality.
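The core idea of matching difficulty to ability can be illustrated with the classic one-parameter (Rasch) Item Response Theory model that PERM takes inspiration from. The sketch below is not PERM itself. PERM learns its difficulty and ability representations offline and uses them to generate environment parameters, whereas this snippet assumes a small, hypothetical pool of environments with already-known scalar difficulties. The function names (`p_success`, `update_ability`, `pick_environment`), the learning rate, and the outcomes in the demo loop are all illustrative assumptions.

```python
import math


def p_success(ability: float, difficulty: float) -> float:
    """1PL (Rasch) IRT model: probability that a student with `ability`
    succeeds on an item (here, an environment) with `difficulty`."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


def update_ability(ability: float, difficulty: float, solved: bool, lr: float = 0.5) -> float:
    """One gradient-ascent step on the Bernoulli log-likelihood of the
    observed outcome with respect to ability (difficulty held fixed).
    The derivative of y*log(p) + (1-y)*log(1-p) w.r.t. ability is (y - p)."""
    return ability + lr * (float(solved) - p_success(ability, difficulty))


def pick_environment(ability: float, env_difficulties: dict[str, float]) -> str:
    """Choose the environment whose difficulty is closest to the current
    ability, i.e. whose predicted success probability is closest to 0.5."""
    return min(env_difficulties, key=lambda name: abs(env_difficulties[name] - ability))


if __name__ == "__main__":
    # Hypothetical pool of environments with pre-estimated difficulties.
    envs = {"easy": -1.0, "medium": 0.5, "hard": 2.0}
    ability = 0.0
    # Made-up outcomes, for illustration only.
    for outcome in [True, True, True, False]:
        env = pick_environment(ability, envs)
        ability = update_ability(ability, envs[env], outcome)
        print(f"{env}: solved={outcome}, ability -> {ability:.3f}")
```

Choosing the environment whose difficulty is nearest the current ability keeps the predicted success rate near 50%. This is one simple way to operationalize the zone of proximal development. Because the ability estimate is tied to an explicit model rather than to a running training statistic, the same model can in principle be reused for a different student.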

@article{tio2023transferable,
  title={Transferable Curricula through Difficulty Conditioned Generators},
  author={Tio, Sidney and Varakantham, Pradeep},
  journal={IJCAI},
  year={2023}
}